Aaron Vasquez

The 3 AM Wake-Up Call That Changed Everything

I'll never forget the Slack notification that woke me up at 3:17 AM on a Tuesday in March 2023. Our payment processing system had gone down. Hard. We were losing about $12,000 per hour, and our support team was fielding hundreds of angry customer emails.

The root cause? A single line of code that had passed through code review, sailed through our test suite, and made it to production without anyone catching the issue. The bug was simple—a race condition in our order processing logic that only manifested under high load. Our tests didn't catch it because we weren't testing concurrent scenarios. Our code reviewers didn't catch it because, honestly, our review process was a checkbox exercise.

That incident cost us $84,000 in lost revenue, another $30,000 in emergency fixes and overtime, and immeasurable damage to customer trust. But it taught us something invaluable: code review and testing aren't separate activities you do because "best practices say so." They're your last line of defense against catastrophic failures.

Over the next 18 months, my team completely rebuilt our approach to code quality. We went from 2-3 production incidents per week to fewer than 1 per month. Our deployment confidence went from "fingers crossed" to "ship it on Friday afternoon." Our test coverage increased from 42% to 89%, but more importantly, our meaningful test coverage—the tests that actually catch real bugs—went from maybe 15% to over 70%.

Here's what we learned, what failed spectacularly, and what actually works when you're operating at scale.

Why Most Code Review Processes Are Theater

Let's be honest about something most teams won't admit: code review is often just security theater. You create a PR, tag a few people, wait for the thumbs-up emoji, and merge. The "reviewers" spend maybe 90 seconds skimming the diff, checking if the code looks "reasonable," and approving it.

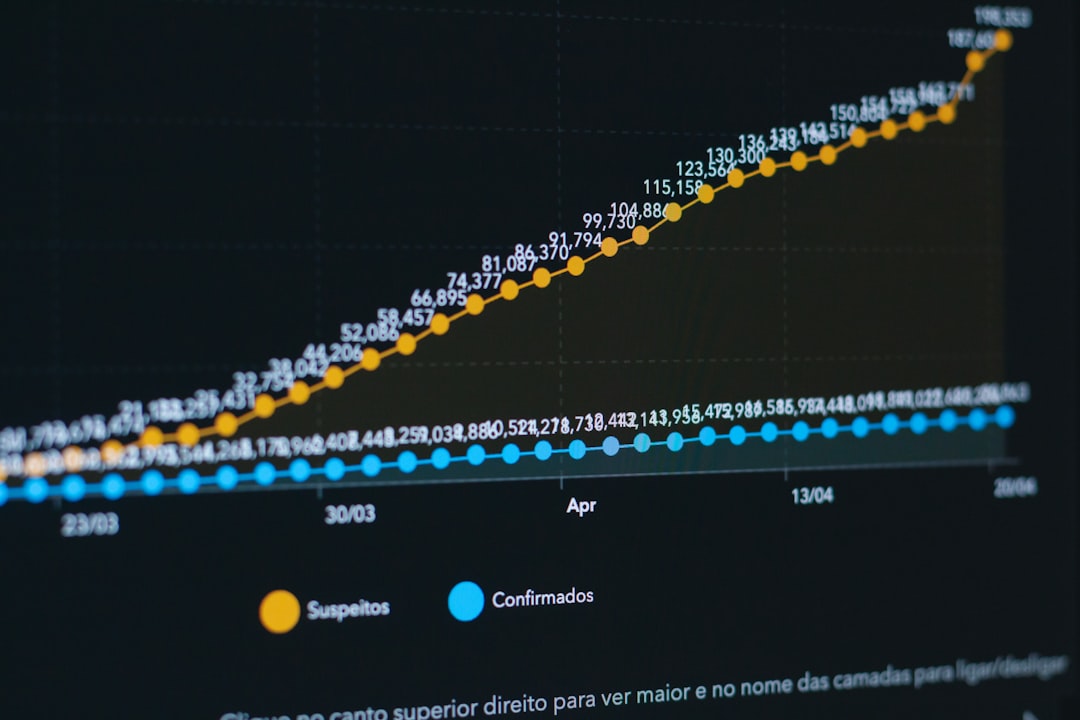

I know this because I tracked our code review metrics for three months before our big process overhaul. Here's what I found:

- Average time spent per review: 4.2 minutes

- Percentage of reviews with substantive comments: 23%

- Percentage of reviews that caught actual bugs: 8%

- Average time from PR creation to first review: 6.7 hours

- Percentage of PRs approved by people who didn't run the code: 91%

That last stat killed me. We had this elaborate CI/CD pipeline, comprehensive test requirements, and a mandatory two-reviewer policy. But 91% of PRs were approved by reviewers who had only read the diff on GitHub, without running the code locally or thinking through the implications.

The wake-up call incident? Three people had approved that PR. None of them had considered what would happen under concurrent load. None of them had asked "what if two requests hit this endpoint simultaneously?" None of them had run the code.
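To make that question concrete, here is a minimal sketch of a concurrency check. The `createInventory` helper and its `reserveUnsafe` and `reserveAtomic` methods are hypothetical stand-ins, not the actual order code from the incident. The sketch only illustrates how firing requests together with `Promise.all` can expose a check-then-act gap that a one-request-at-a-time test never touches.

```javascript
// Hypothetical in-memory inventory; the real order logic is not shown in this article.
function createInventory(stock) {
  let remaining = stock;
  // Simulates an asynchronous step such as a database round trip.
  const pause = () => new Promise((resolve) => setImmediate(resolve));

  return {
    // Unsafe: the stock check and the decrement are separated by an await,
    // so a second request can pass the check before the first one decrements.
    async reserveUnsafe() {
      if (remaining <= 0) return false;
      await pause();
      remaining -= 1;
      return true;
    },
    // Safer in this single-process sketch: check and decrement happen
    // in one synchronous step, before any await.
    async reserveAtomic() {
      if (remaining <= 0) return false;
      remaining -= 1;
      await pause();
      return true;
    },
    remaining: () => remaining,
  };
}

// Fires `requests` reservations at once against a stock of 1.
async function simulate(method, requests) {
  const inventory = createInventory(1);
  const results = await Promise.all(
    Array.from({ length: requests }, () => inventory[method]()),
  );
  return {
    granted: results.filter(Boolean).length,
    remaining: inventory.remaining(),
  };
}
```

With a stock of one and two simultaneous requests, `simulate('reserveUnsafe', 2)` grants both reservations and leaves the stock negative, while `simulate('reserveAtomic', 2)` grants one. In a real service the equivalent fix would more likely be a transaction or an atomic update in the database, which this sketch does not model.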

What We Got Wrong (And You Probably Are Too)

Our old process looked good on paper. Every PR required:

- Two approving reviews

- All tests passing

- Code coverage above 80%

- Linting checks passing

- No merge conflicts

But we were optimizing for the wrong things. We were measuring process compliance, not quality outcomes. Here's what was actually happening:

The "LGTM" Culture: Developers would approve PRs with a quick "looks good to me" comment. No questions asked. No edge cases considered. No architectural implications discussed. Just rubber-stamp approval so everyone could move on.

The Coverage Trap: We had 80% code coverage, but it was mostly meaningless. Developers were writing tests that executed code without actually asserting anything meaningful. I found tests like this:

    test('processes order', async () => {
      const order = await processOrder({ userId: 1, items: [] });
      expect(order).toBeDefined();
    });

This test gives you coverage. It doesn't give you confidence. It doesn't test error cases, edge cases, or the actual business logic. It just confirms that the function returns something.
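For contrast, here is a rough sketch of what more meaningful assertions might look like. The `processOrder` below is a hypothetical stand-in, since the real function is not shown here. Its rules (reject empty orders, total prices in cents) are assumptions for illustration only. It uses Node's built-in `assert` so it runs without a test runner, and the same checks translate directly to Jest's `expect`.

```javascript
const { strictEqual, rejects } = require('node:assert/strict');

// Hypothetical stand-in: the real processOrder and its rules are assumptions.
async function processOrder({ userId, items }) {
  if (!Number.isInteger(userId)) throw new Error('invalid user');
  if (!Array.isArray(items) || items.length === 0) throw new Error('empty order');
  const totalCents = items.reduce(
    (sum, item) => sum + item.priceCents * item.quantity,
    0,
  );
  return { userId, totalCents, status: 'confirmed' };
}

async function checkOrderProcessing() {
  // Business logic: the total is actually computed from the items.
  const order = await processOrder({
    userId: 1,
    items: [
      { priceCents: 1250, quantity: 2 },
      { priceCents: 300, quantity: 1 },
    ],
  });
  strictEqual(order.totalCents, 2800);
  strictEqual(order.status, 'confirmed');

  // Edge case: an empty order is rejected instead of silently accepted.
  await rejects(() => processOrder({ userId: 1, items: [] }), /empty order/);

  // Error case: a malformed user id is rejected.
  await rejects(
    () => processOrder({ userId: 'abc', items: [{ priceCents: 100, quantity: 1 }] }),
    /invalid user/,
  );
  return 'all checks passed';
}
```

Each assertion pins down a behavior that could plausibly break, which is the difference between executing a line and testing it.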

The Speed Trap: We measured review turnaround time and rewarded fast reviews. So reviewers optimized for speed, not quality. The fastest way to review code? Approve it without thinking too hard.

The Expertise Gap: We had a policy that anyone could review anyone's code. Sounds democratic, right? In practice, it meant junior developers were approving complex database optimization PRs they didn't understand, and senior developers were spending time reviewing trivial CSS changes.

The Process That Actually Works

After our incident, we spent two weeks studying how high-performing engineering teams actually do code review. I talked to engineers at Stripe, GitHub, and Shopify. I read every paper I could find on code review effectiveness. I analyzed our own data to understand where bugs were slipping through.

Here's what we implemented, and why each piece matters:

1. Size Limits That Force Better Design

We implemented a hard rule: no PR over 400 lines of code. If your change is bigger, you need to break it into smaller, logical chunks.

This was controversial. Developers complained it would slow them down. "I can't ship features in 400-line increments!" they said.

But here's what happened: it forced better architecture. Instead of massive, monolithic PRs that changed 15 files and touched 3 different subsystems, developers had to think about how to decompose their work. They had to create better abstractions. They had to make incremental, reviewable changes.

The data proved it out:

- PRs under 200 lines: 95% approval rate, 2.1 comments per review, 0.3 bugs per 1000 lines in production

- PRs 200-400 lines: 87% approval rate, 4.7 comments per review, 0.8 bugs per 1000 lines

- PRs over 400 lines (before the rule): 78% approval rate, 1.9 comments per review, 2.4 bugs per 1000 lines

Notice that? PRs within the limit drew more comments per review than the oversized ones (2.1 and 4.7 versus 1.9) and shipped far fewer bugs. Reviewers actually engaged with the code when they could understand it in one sitting.
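A size limit like this is straightforward to automate. The sketch below is one possible way to do it, not the check we actually ran. It assumes the limit counts added plus deleted lines, and it takes the text output of `git diff --numstat` as input. The function names are illustrative.

```javascript
const MAX_CHANGED_LINES = 400; // the limit described above

// Sums added and deleted lines from `git diff --numstat` output.
// Each line has the form "<added>\t<deleted>\t<path>"; binary files
// report "-" for both counts and are treated as zero here.
function countChangedLines(numstat) {
  return numstat
    .split('\n')
    .filter((line) => line.trim() !== '')
    .reduce((total, line) => {
      const [added, deleted] = line.split('\t');
      const addedCount = added === '-' ? 0 : Number(added);
      const deletedCount = deleted === '-' ? 0 : Number(deleted);
      return total + addedCount + deletedCount;
    }, 0);
}

// Returns the changed-line count and whether it is within the limit.
function checkPrSize(numstat, limit = MAX_CHANGED_LINES) {
  const changed = countChangedLines(numstat);
  return { changed, ok: changed <= limit };
}
```

In a CI job, the output of something like `git diff --numstat <base>...HEAD` could be piped into `checkPrSize`, failing the build when `ok` is false. Whether generated files or lockfiles should count toward the limit is a policy decision this sketch leaves open.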

2. The "Run It or Don't Review It" Rule

We made a simple rule: you can't approve a PR unless you've checked out the branch and run the code locally. Not just read it. Not just trust the CI pipeline. Actually run it.

We enforced this through a custom GitHub Action that required reviewers to leave a comment with a screenshot or log output proving they'd run the code. It felt bureaucratic at first, but the results were immediate.

In the first month after implementing this rule, we caught 23 bugs that had passed all automated tests. Things like:

- A memory leak that only manifested after the 50th request

- A UI bug that only appeared on Safari (our CI only tested Chrome)

- A performance regression that made a page load in 3 seconds instead of 300ms

- An edge case where empty strings weren't handled correctly

Here's the thing: running code forces you to think about it differently. When you're just reading a diff, you're in "does this look reasonable?" mode. When you're running code, you're in "does this actually work?" mode. You try edge cases. You click around. You notice things.
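The core of an evidence check like the one described above could be sketched as follows. This is not the GitHub Action itself. The `{ author, body }` comment shape is a simplified, hypothetical structure and not the GitHub API payload. "Evidence" is assumed to mean a Markdown image or a fenced log block in the reviewer's comment.

```javascript
const FENCE = '`'.repeat(3); // Markdown code fence marker

// True if the comment contains a Markdown image or a fenced block of output.
function hasRunEvidence(body) {
  const hasImage = /!\[[^\]]*\]\([^)]+\)/.test(body);
  // Two or more fence markers means at least one opened and closed block.
  const hasLogBlock = body.split(FENCE).length >= 3;
  return hasImage || hasLogBlock;
}

// Returns the approvers who have not left any comment with run evidence.
// `approvers` is a list of logins; `comments` is a list of { author, body }.
function approversMissingEvidence(approvers, comments) {
  return approvers.filter(
    (login) => !comments.some((c) => c.author === login && hasRunEvidence(c.body)),
  );
}
```

A check like this only proves that something was pasted, not that the reviewer exercised the change well, so it works best as a nudge alongside the checklist and not as a guarantee.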

3. Specialized Review Tracks

We stopped pretending that all code reviews are equal. We created three tracks:

Track 1: Architecture Reviews (for changes affecting system design, database schema, API contracts)

- Required: at least one senior engineer + tech lead approval

- Timeline: 48 hours for first review

- Checklist: scalability, backwards compatibility, migration strategy, monitoring plan

Track 2: Feature Reviews (for new features or significant behavior changes)

- Required: at least one engineer familiar with the domain + QA sign-off

- Timeline: 24 hours for first review

- Checklist: test coverage, error handling, user impact, feature flag strategy

Track 3: Fast Track (for bug fixes, documentation, minor refactoring)

- Required: one approval from any senior developer

- Timeline: 4 hours for first review

- Checklist: minimal - just "does this fix the bug without breaking anything?"

This specialization meant the right expertise was reviewing the right changes. Our database expert wasn't spending time on CSS tweaks, and our frontend specialists weren't approving database migration PRs they didn't fully understand.
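One way to express the three tracks as data, with a simple routing function, is sketched below. The approval requirements and timelines come from the tracks above. The path patterns and label names are hypothetical conventions, and a real setup would need its own rules for what counts as an architecture change.

```javascript
// Requirements and first-review timelines as listed in the three tracks.
const TRACKS = {
  architecture: { approvals: 'senior engineer + tech lead', firstReviewHours: 48 },
  feature: { approvals: 'domain engineer + QA sign-off', firstReviewHours: 24 },
  fast: { approvals: 'one senior developer', firstReviewHours: 4 },
};

// Hypothetical conventions: these paths and labels are assumptions.
const ARCHITECTURE_PATHS = [/^db\/migrations\//, /^api\/schema\//];
const FAST_TRACK_LABELS = ['bug', 'docs', 'refactor'];

// Picks a track from the changed file paths and the PR labels.
function pickTrack({ files, labels }) {
  const touchesArchitecture = files.some((file) =>
    ARCHITECTURE_PATHS.some((pattern) => pattern.test(file)),
  );
  if (touchesArchitecture) return 'architecture';
  if (labels.some((label) => FAST_TRACK_LABELS.includes(label))) return 'fast';
  // Default to the stricter of the remaining tracks when unsure.
  return 'feature';
}
```

Checking architecture paths first means a bug fix that touches a migration still gets the architecture review, and an unlabeled PR falls into the feature track instead of the fast track.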

4. The Review Checklist That Actually Gets Used

We tried comprehensive review checklists before. They never worked because they were too long. No one actually went through 47 checklist items for every PR.

So we created a minimal, focused checklist that fits on one screen:

## Code Review Checklist

### Must Check (Every PR)

- [ ] I ran this code locally and tested the happy path

- [ ] I tested at least one error case

- [ ] I understand what problem this solves and why this approach was chosen

- [ ] This won't break existing functionality (I checked for regressions)

### Consider (If Applicable)

- [ ] Database changes have rollback plan

- [ ] Performance impact is acceptable (I checked query times/response times)

- [ ] Error messages are helpful to users/developers

- [ ] Security implications are addressed

- [ ] This works at scale (tested with realistic data volumes)

### Ask Yourself

- Would I be comfortable if this shipped to production right now?

- If this breaks, will we know about it? (monitoring/logging)

- Is there a simpler way to solve this?

The key was making it short enough that people actually use it, but comprehensive enough to catch real issues. We tracked checklist completion and found that reviews with completed checklists caught 3.2x more bugs than reviews without.
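Tracking completion can be as simple as parsing the task list out of the review comment. The sketch below is one possible approach and not the tooling we actually used. It assumes the reviewer pastes the checklist as Markdown and ticks boxes with `[x]`, and it only scores the "Must Check" section.

```javascript
// Counts ticked and total task items under the "### Must Check" heading.
function mustCheckStatus(markdown) {
  let inMustCheck = false;
  let done = 0;
  let total = 0;

  for (const rawLine of markdown.split('\n')) {
    const line = rawLine.trim();
    if (line.startsWith('#')) {
      // Any heading starts a new section; only "Must Check" is scored.
      inMustCheck = /^###\s+Must Check/.test(line);
      continue;
    }
    if (!inMustCheck) continue;

    const item = /^- \[([ xX])\]/.exec(line);
    if (item) {
      total += 1;
      if (item[1] !== ' ') done += 1;
    }
  }
  return { done, total, complete: total > 0 && done === total };
}
```

A bot or CI step could call `mustCheckStatus` on each approving review and record the result, which is enough to compare bug-catch rates between completed and incomplete checklists.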

Testing: Beyond the Coverage Percentage

Our test coverage was 80% before the incident. After the incident, it went up to 89%. But that's not what mattered. What mattered was that we completely changed what we were testing and how we thought about test quality.

The Tests That Actually Caught Bugs

I did an analysis of every production bug we had in the six months before our process overhaul. For each bug, I asked: "Could a test have caught this?"


About the author: Aaron Vasquez covers DevOps practices, CI/CD pipelines, Kubernetes, and platform engineering. Contributing author at NextGenBeing.
